Pixel-only tracking breaks down fast once deals move through a CRM and revenue changes after the initial sale. The fix is custom API development and integration that turns CRM lifecycle changes (lead, MQL, SQL, won, refund) into reliable server-side conversion events for Meta CAPI and Google Enhanced Conversions. This post is for marketing ops, RevOps, founders and technical teams who want accurate attribution without duplicate purchases or misattributed revenue.

Quick summary:

- Use a canonical event record (event_id + order_id) so retries and replays never double-count conversions.

- Map CRM lifecycle stages to platform-specific event names and adjustment flows including refunds and restatements.

- Resolve identity with normalized and hashed email and phone plus click IDs when available.

- Gate every outbound send by consent status and store an audit trail for compliance and debugging.

- Harden delivery with batching, partial-failure handling, dead-letter routing and replay-safe processing.

Quick start

- Define your canonical event schema and generate deterministic event_id values per lifecycle change.

- Stand up an event store (table or queue-backed journal) and record events before calling Meta or Google.

- Implement an event mapping layer for Meta CAPI and Google Enhanced Conversions plus Google adjustments for refunds.

- Add identity normalization and hashing (email and phone) and attach click IDs (gclid and fbp/fbc) when you have them.

- Ship delivery workers with retries, idempotency checks and a dead-letter queue then validate dedupe in platform tooling.

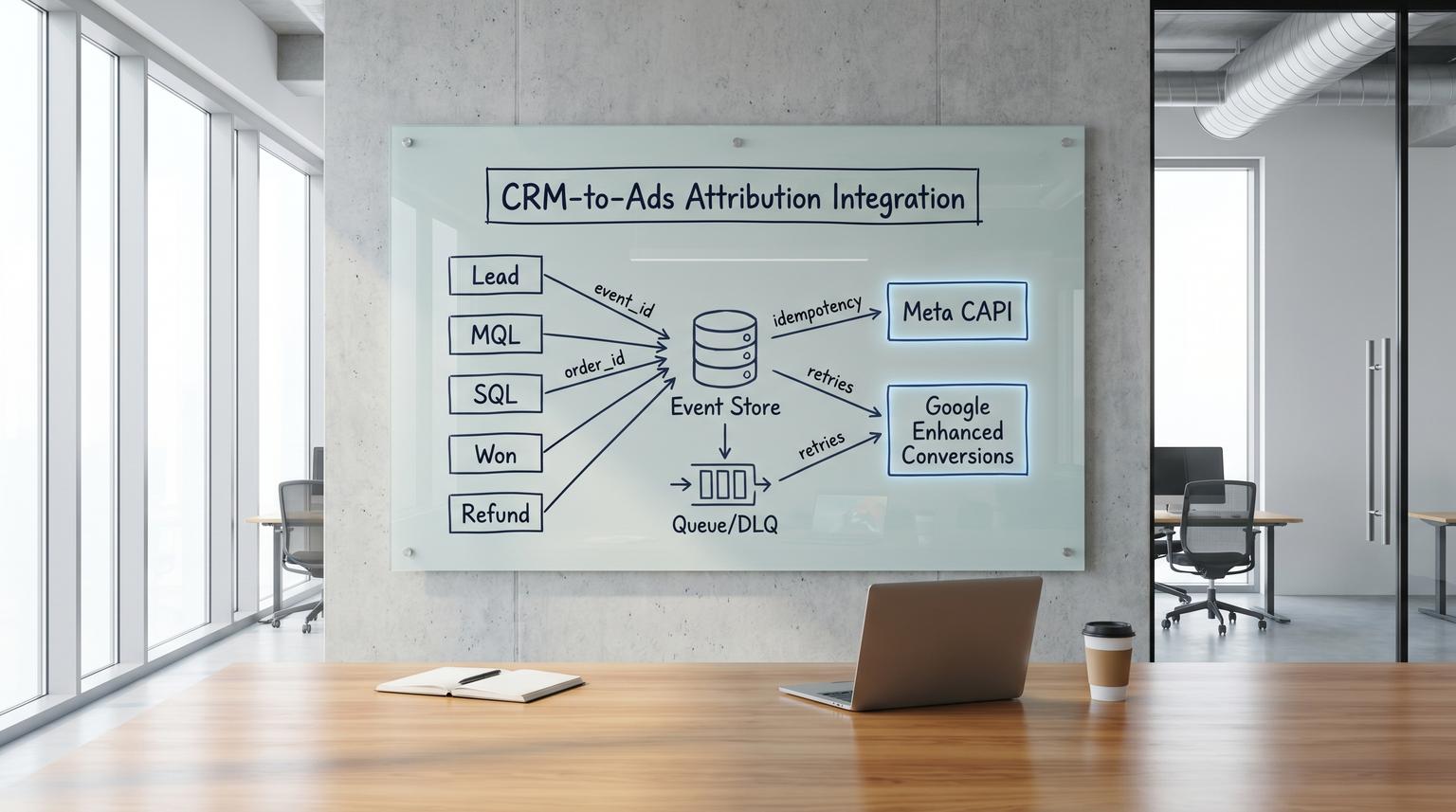

A production-grade CRM to ads bridge works by recording each lifecycle change as a single canonical event with a stable event_id and order_id then delivering that event to Meta CAPI and Google Enhanced Conversions through an idempotent pipeline. Identity is resolved using normalized hashed PII and click IDs where available and every send is consent-gated. Retries preserve the same identifiers and failures are routed to a dead-letter queue for safe replay so attribution stays accurate without double-counted revenue.

The real attribution failure is duplicates, not missing pixels

Most teams notice tracking failures when numbers drop. The more expensive failure shows up when numbers look fine but are wrong: duplicated purchases, late events applied twice and refunds never subtracted. The result is ROAS drift. You keep spending because the dashboard says a campaign is profitable even though revenue was double-counted or credited to the wrong channel.

Server-side conversion APIs help but only if you treat them like an event pipeline, not a one-off webhook. Two patterns drive most problems we see in the wild:

- Retry duplication: a timeout occurs and the sender generates a new ID on retry which creates a second purchase.

- Stage confusion: CRM stages are remapped or renamed and the integration starts sending the same business outcome under different event names which bypasses dedupe rules.

The solution is a canonical idempotent event model and a mapping layer that is explicit about what gets sent where and how adjustments are applied when revenue changes.

End-to-end data flow and where deduplication actually happens

Here is a concrete flow you can implement with n8n, a lightweight API service or both. The key is the event store in the middle which becomes your audit log and replay source of truth.

    [CRM] (Lead/MQL/SQL/Won/Refund)
      |  1) lifecycle change webhook or polling
      v
    [Ingestion API]
      |  2) validate + normalize + consent lookup
      v
    [Canonical Event Store]
      |  3) write event record (idempotent upsert)
      |  4) enqueue delivery jobs
      v
    [Queue / Job Runner]
      |  5) delivery worker with retries
      |     - Meta CAPI sender
      |     - Google Enhanced Conversions sender
      |     - Google Adjustments sender (refunds)
      v
    [Meta CAPI]               [Google Ads API]
      |  6) record result       |  6) partial failure details
      v                         v
    [Delivery Log + Metrics + DLQ]
      |  7) replay from DLQ after fix
      v
    [Reconciliation Reports]

Deduplication is not one mechanism. You should expect to handle it in three places:

- Your own pipeline: idempotency key prevents duplicates caused by retries and replays.

- Meta: dedupe is primarily based on event_name + event_id and it only works if you keep them stable across channels and retries. See a concise breakdown at Meta CAPI deduplication with event_id.

- Google: Enhanced Conversions for web uses order_id as the join key for later uploads of hashed identifiers. That makes order_id a non-negotiable field for won and for many adjustment flows. Reference: Enhanced conversions for web setup.

The canonical idempotent event model you should build around

Your bridge should treat every lifecycle change as an immutable business event. Downstream delivery can be retried, delayed and replayed but the event record stays stable and auditable. If you want a deeper, repeatable methodology for reliability patterns like idempotency, retries, DLQs and rate-limit handling, use our pillar guide: API integration engineering playbook.

Minimum event schema

| Field | Type | Why it matters |
|---|---|---|
| event_id | string | Idempotency key. Stable across retries. Used by Meta dedupe when paired with event_name. |
| source | string | Where it came from, for example hubspot, salesforce, custom_crm. |
| event_name | string | Canonical lifecycle name, for example lead, mql, sql, won, refund. |
| occurred_at | ISO-8601 timestamp | Business time. Should not change on retries. Used for ordering and platform windows. |
| entity_type | string | lead, contact, opportunity, order. |
| entity_id | string | CRM ID of the entity that changed. |
| order_id | string or null | Required for revenue events and for Google Enhanced Conversions join logic and many adjustment requests. |
| value | number or null | Revenue value for won or restatement. Keep currency alongside it. |
| currency | string or null | Meta purchase requires value and currency. Store both even if one platform is primary. |
| identity | object | Email, phone and click IDs. Normalize raw PII in memory, then store only the hashed values plus click IDs when allowed. |
| consent_status | string | granted, denied, unknown plus the consent basis for audit. |
| attempts | integer | Retry counter across deliveries per destination. |
| destinations | object | Status per platform, for example meta: sent/failed, google: sent/failed. |
| next_retry_at | timestamp or null | Backoff scheduling for rate limits and transient errors. |
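
To make the "write first, deliver second" idea concrete, here is a minimal sketch of an idempotent write into an event store. It assumes SQLite purely for illustration, and the table layout and the `record_event` helper are hypothetical names, not part of any platform SDK. It keeps only a subset of the schema above to stay short.

```python
import sqlite3

# In-memory database for illustration only; a real store would be durable.
conn = sqlite3.connect(":memory:")
conn.execute("""
    CREATE TABLE events (
        event_id    TEXT PRIMARY KEY,  -- idempotency key
        event_name  TEXT NOT NULL,
        occurred_at TEXT NOT NULL,
        order_id    TEXT,
        value       REAL,
        currency    TEXT
    )
""")


def record_event(conn, event: dict) -> bool:
    """Insert the event once. Returns True only when the row is new."""
    cur = conn.execute(
        "INSERT OR IGNORE INTO events "
        "(event_id, event_name, occurred_at, order_id, value, currency) "
        "VALUES (:event_id, :event_name, :occurred_at, :order_id, :value, :currency)",
        event,
    )
    conn.commit()
    # rowcount is 0 when the primary key already existed and the insert was ignored.
    return cur.rowcount == 1


won = {
    "event_id": "6b0c6a-example",
    "event_name": "won",
    "occurred_at": "2026-04-17T17:34:56+00:00",
    "order_id": "INV-100291",
    "value": 199.00,
    "currency": "USD",
}
is_new = record_event(conn, won)        # first webhook delivery
is_duplicate = not record_event(conn, won)  # CRM re-sends the same change
```

Only enqueue delivery jobs when the write reports a new row. A repeated webhook then becomes a no-op instead of a second purchase.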

Deterministic event_id rules

Pick a strategy that guarantees the same lifecycle change always produces the same event_id. A good rule is to compose it from stable business keys:

    event_id = sha256(
      source + "|" + event_name + "|" + entity_type + "|" + entity_id + "|" + occurred_at
    )

For revenue events, include order_id if available. For stage changes, use the CRM stage-change timestamp. The important operational insight is this: never generate a new UUID inside the retry loop. If the sender times out after Meta receives the event, a new ID on retry creates a duplicate conversion.
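
The rule above can be sketched in a few lines of Python. The `make_event_id` helper is a hypothetical name, and the sketch assumes occurred_at is always serialized in one fixed format, since two different string renderings of the same instant would hash to different IDs.

```python
import hashlib
from typing import Optional


def make_event_id(source: str, event_name: str, entity_type: str,
                  entity_id: str, occurred_at: str,
                  order_id: Optional[str] = None) -> str:
    """Derive a stable event_id from business keys only (no random values, no clock reads)."""
    parts = [source, event_name, entity_type, entity_id, occurred_at]
    if order_id:
        # Revenue events: fold in the order key when it is available.
        parts.append(order_id)
    return hashlib.sha256("|".join(parts).encode("utf-8")).hexdigest()


first_attempt = make_event_id("hubspot", "won", "opportunity", "opp-42",
                              "2026-04-17T17:34:56+00:00", order_id="INV-100291")
retry_attempt = make_event_id("hubspot", "won", "opportunity", "opp-42",
                              "2026-04-17T17:34:56+00:00", order_id="INV-100291")
assert first_attempt == retry_attempt  # a retry recomputes the same ID
```

Compute the ID once at ingestion and store it on the event record. Delivery workers should read it from the record rather than recompute it.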

Lifecycle mapping rules for Meta CAPI and Google Enhanced Conversions

A bridge succeeds or fails on explicit mapping rules. Do not rely on tribal knowledge like MQL means lead or purchase means won. Write the contract.

| CRM lifecycle | Canonical meaning | Meta CAPI | Google Ads | Notes |
|---|---|---|---|---|
| Lead | New captured lead | Lead (server) | Optional conversion action | Server-only is common. If you also fire Pixel Lead, ensure same event_id. |
| MQL | Marketing qualified | Custom event (for example MQL) | Optional offline conversion | Often not a website action. Keep action_source consistent with how it was created. |
| SQL | Sales qualified | Custom event (for example SQL) | Optional offline conversion | Best for pipeline attribution. Keep volume low and definitions stable. |
| Won | Closed won revenue | Purchase | Enhanced Conversions join by order_id | Include value and currency. order_id should match what you want as the revenue key. |
| Refund | Revenue reversed or reduced | Purchase with negative value or a defined refund event (implementation-dependent) | Conversion adjustment retraction or restatement | Google supports retraction and restatement. Use order_id to target the original conversion. Reference: Import conversion adjustments. |

A decision rule that prevents chaos: only send revenue to ads platforms from one system of record. If Stripe and the CRM can both emit won events you will double-count. Pick one and build a reconciliation job to cross-check rather than dual-sending purchases.
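
One way to "write the contract" is to keep the mapping as data rather than scattering it through workflow nodes. The sketch below is illustrative: `LIFECYCLE_ROUTES`, `route` and the `google_flow` labels are hypothetical names for this post, not platform constants, and the refund row leaves the Meta side unset because that policy is implementation-dependent.

```python
# Canonical lifecycle name -> what each destination should receive.
LIFECYCLE_ROUTES = {
    "lead":   {"meta_event": "Lead",     "google_flow": "conversion_action"},
    "mql":    {"meta_event": "MQL",      "google_flow": "offline_conversion"},
    "sql":    {"meta_event": "SQL",      "google_flow": "offline_conversion"},
    "won":    {"meta_event": "Purchase", "google_flow": "enhanced_conversion"},
    # Meta refund handling is a policy decision, so nothing is sent by default.
    "refund": {"meta_event": None,       "google_flow": "conversion_adjustment"},
}


def route(event_name: str) -> dict:
    """Look up the contract for a canonical event name and fail loudly on unknown stages."""
    try:
        return LIFECYCLE_ROUTES[event_name]
    except KeyError:
        raise ValueError(
            f"unmapped lifecycle stage: {event_name!r}; update the mapping before sending"
        ) from None


won_route = route("won")
```

Raising on an unknown name is deliberate. If someone renames a CRM stage, the event stops at the mapping layer and can be dead-lettered rather than being sent under a new event name that bypasses dedupe.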

Identity resolution, hashing and consent gating that holds up in production

Match quality drives attribution but privacy and terms drive whether you should send anything at all. If you are also fighting duplicates and dropped records in webhook-to-CRM style flows, see Stop Duplicate Contacts and Dropped Leads With API Integration for Business That Can Take a Hit for patterns that translate directly to ads pipelines.

Normalize then hash

- Email: lowercase, trim whitespace then SHA-256 hash.

- Phone: E.164 normalization then SHA-256 hash.

- Names and address: normalize consistently if you choose to send them but do not over-collect.
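
Here is a minimal sketch of the normalize-then-hash step using only the standard library. The helper names are hypothetical, and the phone logic is deliberately naive: it assumes any number without a leading + is a national number for one default country. A dedicated phone-parsing library is a safer choice in production. Exact formatting rules also differ slightly between platforms (for example, whether the leading + is kept before hashing), so check each platform's current spec.

```python
import hashlib
import re


def sha256_hex(value: str) -> str:
    return hashlib.sha256(value.encode("utf-8")).hexdigest()


def hash_email(raw: str) -> str:
    """Trim and lowercase, then hash."""
    return sha256_hex(raw.strip().lower())


def normalize_phone_e164(raw: str, default_country_code: str = "1") -> str:
    """Naive E.164 formatting. ASSUMPTION: no leading '+' means a national number."""
    digits = re.sub(r"\D", "", raw)
    if raw.strip().startswith("+"):
        return "+" + digits
    return "+" + default_country_code + digits.lstrip("0")


def hash_phone(raw: str, default_country_code: str = "1") -> str:
    return sha256_hex(normalize_phone_e164(raw, default_country_code))


# Two spellings of the same address produce the same hash.
same_email = hash_email("  Jane.Doe@Example.com ") == hash_email("jane.doe@example.com")
us_phone = normalize_phone_e164("(415) 555-0100")
uk_phone = normalize_phone_e164("+44 20 7946 0958")
```

Hash as late as practical and keep raw values out of logs. The event store only needs the hashed output.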

For Google Enhanced Conversions, an easy bug is packing multiple identifiers into a single UserIdentifier object. Google treats it as a oneof and clears other fields. Mirror the docs pattern and create separate objects per identifier. This is called out directly in the API documentation: Enhanced conversions for web setup.

    // IMPORTANT: UserIdentifier.identifier is a oneof.
    // Set only ONE of hashed_email, hashed_phone_number, address_info, etc.
    // Setting more than one will clear the others.
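
The "one identifier per object" pattern can be sketched as a small builder. This uses plain dictionaries that mirror the conceptual JSON shape used in this post, not the Google Ads client library classes, and `build_user_identifiers` is a hypothetical helper name.

```python
from typing import List, Optional


def build_user_identifiers(hashed_email: Optional[str] = None,
                           hashed_phone: Optional[str] = None) -> List[dict]:
    """Return one object per identifier so no oneof field overwrites another."""
    identifiers = []
    if hashed_email:
        identifiers.append({
            "hashed_email": hashed_email,
            "user_identifier_source": "FIRST_PARTY",
        })
    if hashed_phone:
        identifiers.append({
            "hashed_phone_number": hashed_phone,
            "user_identifier_source": "FIRST_PARTY",
        })
    return identifiers


both = build_user_identifiers(hashed_email="a" * 64, hashed_phone="b" * 64)
```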

Consent gating approach

Do not treat consent as a UI checkbox. Treat it as a field in the canonical event record that controls delivery. Practically, that means:

- At ingestion time, resolve consent_status from your consent platform, CRM properties or customer data terms state.

- If consent is denied or unknown, store the event but mark it blocked and do not send PII-based identifiers.

- Keep an audit trail: when consent was captured and what policy decision was applied.
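
The three rules above could look roughly like this in code. The `gate_event` helper and the `delivery_state`, `outbound_identity` and `consent_audit` field names are assumptions for illustration. Map them to whatever your event record actually uses.

```python
from datetime import datetime, timezone


def gate_event(event: dict, now: datetime) -> dict:
    """Return a copy of the event with a delivery decision and an audit entry attached."""
    status = event.get("consent_status", "unknown")
    allowed = status == "granted"  # denied and unknown are both blocked
    gated = dict(event)
    gated["delivery_state"] = "queued" if allowed else "blocked_consent"
    # Identifiers are only exposed to senders when consent is granted.
    gated["outbound_identity"] = dict(event.get("identity", {})) if allowed else {}
    gated["consent_audit"] = {
        "consent_status": status,
        "decision": gated["delivery_state"],
        "decided_at": now.isoformat(),
    }
    return gated


now = datetime(2026, 4, 17, 17, 34, 56, tzinfo=timezone.utc)
granted = gate_event({"event_id": "e1", "consent_status": "granted",
                      "identity": {"hashed_email": "a" * 64}}, now)
unknown = gate_event({"event_id": "e2", "identity": {"hashed_email": "b" * 64}}, now)
```

The blocked event is still stored, so a later consent change or audit request can be answered from the record instead of from memory.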

For Google Enhanced Conversions for web, you also need organizational readiness. The Google Ads API exposes whether customer data terms were accepted (conversion_tracking_setting.accepted_customer_data_terms). Many teams skip this pre-flight check then wonder why uploads fail or get rejected.

Reference payloads for Meta CAPI and Google Enhanced Conversions

Below are minimal examples that align with the canonical event model. Treat them as a starting spec and validate against your account setup.

Meta CAPI Purchase payload (server-side)

    POST https://graph.facebook.com/v20.0/{pixel_id}/events

    {
      "data": [
        {
          "event_name": "Purchase",
          "event_time": 1735689600,
          "event_id": "6b0c6a...",
          "action_source": "system_generated",
          "user_data": {
            "em": ["<sha256_hashed_email>"],
            "ph": ["<sha256_hashed_phone>"],
            "client_ip_address": "203.0.113.10",
            "client_user_agent": "Mozilla/5.0 ...",
            "fbp": "fb.1.1712345678.1234567890",
            "fbc": "fb.1.1712345678.AbCdEf..."
          },
          "custom_data": {
            "currency": "USD",
            "value": 199.00,
            "order_id": "INV-100291"
          }
        }
      ]
    }

If you also fire Purchase from the browser, the Pixel event must use the same event_id to dedupe with CAPI. This is a common failure pattern: the browser uses one eventID and the server generates another so Meta counts two purchases.
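
As a rough sketch of the mapping layer for Meta, the function below builds one CAPI event object from a canonical event record. The `to_meta_event` name and the `hashed_email` / `hashed_phone` keys inside identity are assumptions for this example. The output field names follow the payload shown above.

```python
from datetime import datetime


def to_meta_event(event: dict, meta_event_name: str) -> dict:
    """Translate one canonical event into a Meta CAPI event object."""
    occurred = datetime.fromisoformat(event["occurred_at"])
    payload = {
        "event_name": meta_event_name,
        "event_time": int(occurred.timestamp()),  # business time, not send time
        "event_id": event["event_id"],            # reused verbatim on every attempt
        "action_source": "system_generated",
        "user_data": {},
    }
    identity = event.get("identity", {})
    if identity.get("hashed_email"):
        payload["user_data"]["em"] = [identity["hashed_email"]]
    if identity.get("hashed_phone"):
        payload["user_data"]["ph"] = [identity["hashed_phone"]]
    for key in ("fbp", "fbc"):
        if identity.get(key):
            payload["user_data"][key] = identity[key]
    if event.get("value") is not None:
        payload["custom_data"] = {
            "currency": event["currency"],
            "value": event["value"],
            "order_id": event["order_id"],
        }
    return payload


meta_purchase = to_meta_event({
    "event_id": "6b0c6a-example",
    "occurred_at": "2025-01-01T00:00:00+00:00",
    "identity": {"hashed_email": "a" * 64, "fbp": "fb.1.1712345678.1234567890"},
    "value": 199.00,
    "currency": "USD",
    "order_id": "INV-100291",
}, "Purchase")
```

Because event_time and event_id both come from the stored record, calling this function again during a retry or replay yields an identical payload.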

Google Enhanced Conversions for web (order_id join key)

Enhanced Conversions for web is often a two-step join. At tag time the site sends the click identifier and order_id. Later, you upload hashed identifiers tied to that same order_id via API. The docs emphasize sending first-party data quickly, commonly within 24 hours. Reference: Enhanced conversions for web setup.

UploadClickConversionsRequest (conceptual)

    {
      "customer_id": "1234567890",
      "partial_failure": true,
      "conversions": [
        {
          "conversion_action": "customers/1234567890/conversionActions/987654321",
          "order_id": "INV-100291",
          "conversion_date_time": "2026-04-17 12:34:56-05:00",
          "user_identifiers": [
            {"hashed_email": "<sha256_hashed_email>", "user_identifier_source": "FIRST_PARTY"},
            {"hashed_phone_number": "<sha256_hashed_phone>", "user_identifier_source": "FIRST_PARTY"}
          ]
        }
      ]
    }

Refund handling in Google (retraction vs restatement)

Refunds should not be an afterthought. Model them as first-class events so revenue reporting stays truthful.

- Full refund: use a retraction adjustment so the original conversion is removed.

- Partial refund or corrected value: use a restatement adjustment with the updated value.

- Wrong conversion action mapping: retract the original conversion then upload a new conversion under the correct action. Google notes you cannot change the conversion action via adjustment.

Google commonly requires order_id for webpage conversions and for adjustments when the original conversion had an order_id. Reference: Import conversion adjustments.
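
The retraction-versus-restatement decision can be sketched as follows. This builds a conceptual dictionary in the same spirit as the request example above, not a client library object. `build_adjustment` is a hypothetical helper, and the rule "refund covers the full original value means retraction" is an assumption about your refund policy.

```python
def build_adjustment(conversion_action: str, order_id: str,
                     adjustment_date_time: str, original_value: float,
                     refunded_value: float, currency_code: str) -> dict:
    """Pick RETRACTION for a full refund, RESTATEMENT with the remaining value otherwise."""
    adjustment = {
        "conversion_action": conversion_action,
        "order_id": order_id,  # targets the original conversion
        "adjustment_date_time": adjustment_date_time,
    }
    if refunded_value >= original_value:
        adjustment["adjustment_type"] = "RETRACTION"
    else:
        adjustment["adjustment_type"] = "RESTATEMENT"
        adjustment["restatement_value"] = {
            "adjusted_value": round(original_value - refunded_value, 2),
            "currency_code": currency_code,
        }
    return adjustment


ACTION = "customers/1234567890/conversionActions/987654321"
full_refund = build_adjustment(ACTION, "INV-100291", "2026-04-20 09:00:00-05:00",
                               199.00, 199.00, "USD")
partial_refund = build_adjustment(ACTION, "INV-100291", "2026-04-20 09:00:00-05:00",
                                  199.00, 50.00, "USD")
```

Note that the restatement carries the new total value, not the refunded amount. Give the refund event its own deterministic event_id so that a replayed refund does not produce a second adjustment.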

Operational hardening for retries, timeouts and rate limits

Ad APIs will rate-limit. Networks will time out. Your job is to make those realities boring.

Retry strategy with idempotency

- Always write the canonical event record first then attempt delivery. If the sender crashes mid-call you can safely retry.

- Use exponential backoff with jitter and a max attempts threshold per destination.

- Preserve event_id across every attempt and keep order_id stable.

- Batch requests to reduce quota pressure.

- For Google uploads and adjustments set partial_failure = true so one bad row does not fail the whole batch.
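
A minimal sketch of the retry loop, assuming exponential backoff with full jitter. `TransientError`, `backoff_delay` and `deliver_with_retries` are hypothetical names, and `send` stands in for whichever platform sender you use. The point to notice is that the event object is passed through untouched on every attempt.

```python
import random
import time


class TransientError(Exception):
    """Hypothetical marker for timeouts, rate limits and 5xx responses."""


def backoff_delay(attempt: int, base: float = 1.0, cap: float = 300.0) -> float:
    """Full jitter: a random delay between 0 and min(cap, base * 2**attempt) seconds."""
    return random.uniform(0, min(cap, base * (2 ** attempt)))


def deliver_with_retries(event: dict, send, max_attempts: int = 5, sleep=time.sleep):
    """Call send(event) until it succeeds or attempts run out. The event is never rebuilt."""
    for attempt in range(max_attempts):
        try:
            return send(event)
        except TransientError:
            if attempt == max_attempts - 1:
                raise  # caller routes the event to the dead-letter queue
            sleep(backoff_delay(attempt))


# Usage: a fake sender that times out twice, then succeeds.
seen_ids = []


def flaky_send(event: dict) -> str:
    seen_ids.append(event["event_id"])
    if len(seen_ids) < 3:
        raise TransientError("timeout")
    return "sent"


result = deliver_with_retries({"event_id": "6b0c6a-example"}, flaky_send,
                              sleep=lambda seconds: None)
```

In a queue-backed setup you would usually persist next_retry_at and re-enqueue the job instead of sleeping in-process. The delay calculation stays the same.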

Dead-letter queue and replay rules

A dead-letter queue is where events go when they are not safe to retry automatically, for example schema validation failures, missing required fields, consent problems or persistent platform errors like CONVERSION_NOT_FOUND. The point is not to drop them. The point is to hold them for a human fix and then replay without duplication.
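
The routing decision and a replay-safe loop might look like the sketch below. The error categories, `next_step` and `replay` are hypothetical. In practice you would map real platform error codes into categories like these and back `already_sent` with the delivery log rather than an in-memory set.

```python
# ASSUMPTION: error kinds are your own normalized categories, not platform codes.
RETRYABLE = {"timeout", "rate_limited", "server_error"}


def next_step(error_kind: str, attempts: int, max_attempts: int = 5) -> str:
    """Retry transient errors within budget; everything else waits for a human."""
    if error_kind in RETRYABLE and attempts < max_attempts:
        return "retry"
    return "dead_letter"


def replay(dead_letters: list, send, already_sent: set) -> list:
    """Re-send fixed events with their stored event_id; skip any already delivered."""
    results = []
    for event in dead_letters:
        if event["event_id"] in already_sent:
            results.append((event["event_id"], "skipped_duplicate"))
            continue
        send(event)
        already_sent.add(event["event_id"])
        results.append((event["event_id"], "sent"))
    return results


delivered = []
outcome = replay(
    [{"event_id": "a"}, {"event_id": "b"}, {"event_id": "a"}],
    send=delivered.append,
    already_sent={"b"},
)
```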

We recommend logging a minimal set of useful fields so you can trace any attribution discrepancy in minutes:

Log fields:

- event_id

- order_id or entity_id

- destination (meta, google_ec, google_adjustment)

- attempt_number

- request_time and response_time

- error_code and error_message

- result (sent, skipped_duplicate, blocked_consent, dead_letter)

Metrics:

- retry_rate

- error_rate

- queue_lag

- dead_letter_size

This is also where n8n shines for teams that want a visual workflow plus strong operational control. We often pair n8n for orchestration with a small service for hashing, signing and strict schema validation so the workflow stays readable while the core logic stays testable. For a concrete CRM-centered reference architecture with queues, canonical mapping, retries, idempotency, monitoring and rollback, see API Integration for Enterprises: Secure, Scalable Middleware That Keeps CRM, ERP and Support in Sync.

Validation and launch checklist to prevent duplicate or misattributed revenue

Use this checklist during implementation and again after any CRM pipeline change.

- For any event sent from both browser and server, verify that event_name and event_id match exactly for Meta.

- Verify event_id stays the same across retries and replays by inspecting delivery logs.

- Confirm won events always have order_id, value and currency before they enter the delivery queue.

- For Google Enhanced Conversions verify you create separate UserIdentifier objects per identifier field.

- Turn on partial_failure for Google uploads and store per-row errors with the canonical event record.

- Test refund flows using retraction and restatement and confirm reporting changes as expected.

- Confirm consent gating blocks sending hashed identifiers when consent is denied or unknown.

- Run a backfill dry-run in a sandbox or using test event tooling before enabling production sends.
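
Several of these checks can run automatically before an event enters the delivery queue. The sketch below is one possible pre-queue validator. `validate_for_delivery` is a hypothetical name and the rules simply restate the checklist items about required fields.

```python
def validate_for_delivery(event: dict) -> list:
    """Return a list of problems. An empty list means the event may be queued."""
    problems = []
    for field in ("event_id", "event_name", "occurred_at"):
        if not event.get(field):
            problems.append(f"missing {field}")
    if event.get("event_name") == "won":
        for field in ("order_id", "value", "currency"):
            if event.get(field) in (None, ""):
                problems.append(f"won event missing {field}")
    if event.get("event_name") == "refund" and not event.get("order_id"):
        problems.append("refund event missing order_id")
    return problems


good = validate_for_delivery({
    "event_id": "6b0c6a-example", "event_name": "won",
    "occurred_at": "2026-04-17T17:34:56+00:00",
    "order_id": "INV-100291", "value": 199.00, "currency": "USD",
})
bad = validate_for_delivery({
    "event_id": "6b0c6a-example", "event_name": "won",
    "occurred_at": "2026-04-17T17:34:56+00:00", "value": 199.00,
})
```

Events that return problems belong in the dead-letter queue with the problem list attached, so the fix and replay can happen without guesswork.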

If you want ThinkBot Agency to review your current setup or build the bridge end-to-end, book a consultation. We will map your CRM lifecycle, define the event contract and implement dedupe, consent and replay so your attribution is trustworthy.

To see the kinds of automation and integration work we ship in production, you can also review our portfolio.

FAQ

Common follow-ups we get when teams move from pixels to a CRM-driven conversion pipeline.

Do we still need the Meta Pixel if we use Meta CAPI?

Often yes. Pixel plus CAPI gives redundancy and higher match quality but only if you dedupe correctly by sending the same event_name and event_id for overlapping events. If you cannot guarantee shared IDs, send server-only for those events to avoid double counting.

What should we use as event_id and order_id if our CRM does not have a clean order number?

Use deterministic IDs. order_id should be a stable revenue key like invoice_id, payment_id or a generated value stored back onto the opportunity at the moment of won. event_id should be derived from stable keys such as lifecycle type, entity ID and occurred_at so the same event can be retried without creating a new conversion.

How do we handle refunds or chargebacks so revenue is not overstated?

Store refunds as first-class events. For Google use conversion adjustments with retraction for full refunds or restatement for value changes using order_id to target the original conversion. For Meta, implement a consistent policy for refund events so reporting aligns with how you define revenue in finance and BI.

What is the most common mistake that creates duplicate purchases?

Generating a new event_id on retries or having the browser and server send different IDs for the same purchase. A timeout does not mean Meta or Google did not receive the event so retries must reuse the same idempotency identifiers.

When is this approach not the best fit?

If your business has no CRM stages, no post-purchase revenue changes and a simple checkout with reliable client-side tagging, a full bridge can be unnecessary overhead. In that case, focus on clean tagging and only add server-side delivery for the few events where data loss is provably hurting decision-making.